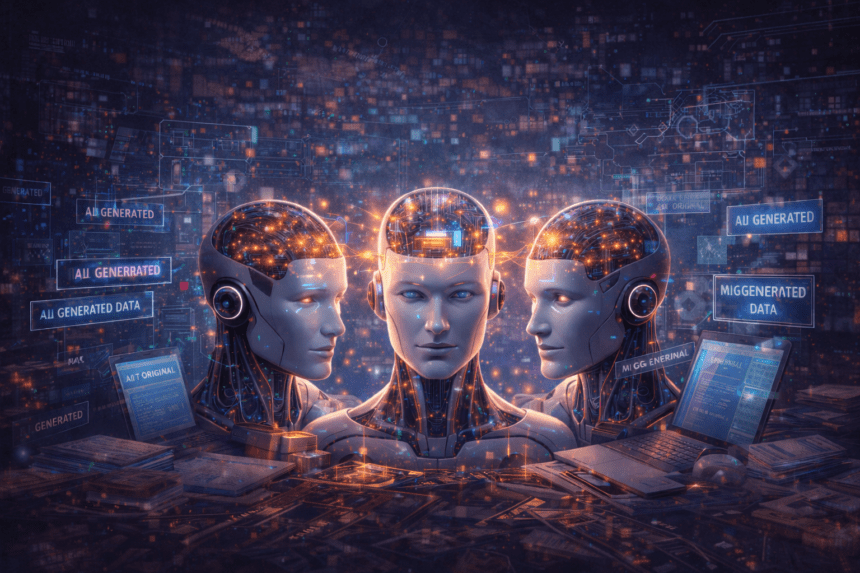

The concern around AI sameness has real research behind it

An opinion piece claims that an AI “hive mind” is forming and that it is making people dumber. The headline is provocative, but the underlying issue is not invented. There is credible research behind the fear that large language models can flatten originality, recycle dominant patterns, and reinforce the same kinds of answers over time. What is less clear is whether that process is already making humans cognitively weaker in any measurable, settled way.

That distinction matters.

So the most defensible version of this story is not “science proves AI is making us dumber.” It is this: researchers are increasingly warning that AI can homogenize outputs, reduce diversity in generated content, and create feedback loops if synthetic content keeps feeding future models.

At the center of the debate is a now widely cited 2024 Nature paper on “model collapse.” The paper found that when generative models are trained recursively on their own outputs, they can begin to lose information about the real world, especially rare or less common patterns. Over time, that can produce degraded, narrower, and less faithful outputs. Nature’s coverage of the issue put it more plainly: too much AI-generated data in training pipelines can make models forget the unusual edges of reality.
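To make the mechanism concrete, here is a toy sketch of the recursive loop, not the method used in the Nature paper. It assumes a made-up "language" of 5 common and 45 rare tokens, and a "model" that does nothing more than re-estimate token frequencies from a finite sample of the previous generation's output. The function name, vocabulary, and sample size are all hypothetical choices for illustration.

```python
import random
from collections import Counter


def simulate_recursive_training(weights, sample_size, generations, seed=0):
    """Toy model collapse: each generation is 'trained' only on samples
    drawn from the previous generation's estimated distribution.

    Returns the number of tokens with non-zero probability after each
    generation, starting with the original distribution.
    """
    rng = random.Random(seed)
    tokens = list(weights)
    current = dict(weights)
    survivors = [sum(1 for w in current.values() if w > 0)]

    for _ in range(generations):
        # Generate synthetic "data" from the current model.
        sample = rng.choices(
            tokens, weights=[current[t] for t in tokens], k=sample_size
        )
        # "Retrain": the next model is just the empirical frequencies.
        counts = Counter(sample)
        current = {t: counts.get(t, 0) / sample_size for t in tokens}
        # A token that was never sampled now has probability zero
        # and can never come back.
        survivors.append(sum(1 for w in current.values() if w > 0))

    return survivors


# Hypothetical vocabulary: 5 common tokens (90% of mass), 45 rare ones (10%).
weights = {f"common_{i}": 0.18 for i in range(5)}
weights.update({f"rare_{i}": 0.10 / 45 for i in range(45)})

history = simulate_recursive_training(weights, sample_size=200, generations=30)
print(history)  # surviving token count per generation
```

In this sketch the count of surviving tokens can only fall or stay flat, because a rare token that misses one sample is lost to every later generation. Real training pipelines are far more complex, but the toy shows why the tails of a distribution are the first thing to go.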

That is one half of the AI hive mind problem.

The other half is what happens on the user side. A 2025 open-access study in Computers in Human Behavior: Artificial Humans examined the “homogenizing effect” of large language models on creative diversity. The researchers found that while AI assistance could improve the novelty of writing for individual users, widespread use of the same systems risked reducing the collective diversity of ideas. In plain English: AI may help one person sound better, while making everyone sound a bit more alike.

That is where the hive mind language starts to make sense.

Large language models are built to predict likely continuations from enormous datasets. That makes them powerful, but it also makes them pattern-seeking machines. They often converge on fluent, plausible, highly repeatable answers. That can be useful when the goal is speed, summarization, or consistency. It becomes less useful when the goal is originality, dissent, edge-case thinking, or genuinely new synthesis. The risk is not that every model says the exact same thing every time. The risk is that they increasingly orbit the same center of gravity.

There is also a structural reason this could worsen.

As AI-generated content floods the web, future models may end up training on a growing pool of machine-written text rather than human-created material. Researchers have been warning that this recursive loop can degrade quality and compress variety. That does not just affect future models. It also affects the information environment human beings read, cite, repost, and absorb. If the internet fills with increasingly standardized AI output, then both machines and people may be drawing from a narrower river of language and ideas.

But this is the point where the story needs discipline.

There is a real difference between “AI may encourage homogenization” and “AI is making us dumber.” The sources I checked support the first claim much more strongly than the second. I did not find a primary source establishing a scientific consensus that AI use is directly lowering human intelligence. What I did find was a growing body of concern around reduced diversity, recursive contamination of training data, and the possibility that overreliance on polished machine outputs could weaken habits of original thought. That is a serious warning, but it is still a warning, not a final verdict.

In practice, the real-world effect may depend less on the models than on how humans use them.

Used well, AI can accelerate brainstorming, research, drafting, coding, and synthesis. Used lazily, it can become a substitute for wrestling with hard ideas. That is the real cultural fault line. If people treat AI as a co-pilot, it may expand productivity without crushing judgment. If people outsource too much of the thinking process itself, the result could be a world full of cleaner prose, faster summaries, and thinner reasoning.

That is why the AI hive mind story matters.

The danger is not just bad outputs. It is the slow normalization of median thinking at industrial scale. If models keep learning from models, and humans keep leaning on the same model-shaped answers, the internet could become more polished while becoming less surprising. The sharpest concern is not that AI will suddenly make humanity stupid. It is that it may make blandness feel smart, repetition feel authoritative, and consensus feel deeper than it really is.

So yes, there is a real story here.

The “AI hive mind” is a credible concern backed by emerging research on homogenization and model collapse. But the claim that AI is definitively “making us dumber” goes beyond what the strongest evidence currently shows. For now, the better headline is this: AI may be making digital culture more uniform, and if humans stop pushing back with originality, that could become a much bigger problem than most people realize.