When social media becomes the front line of modern conflict

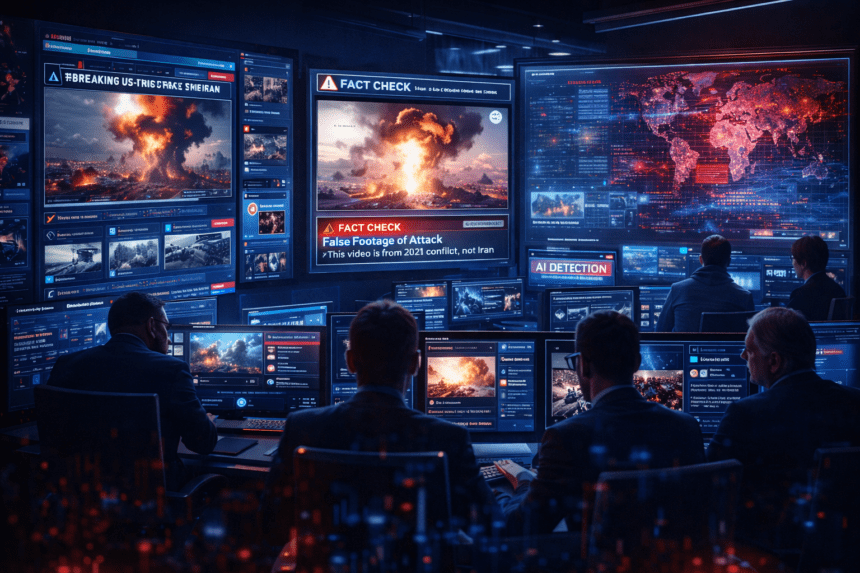

Modern war is no longer fought only with missiles, aircraft, and soldiers. It is also fought with information, narratives, and viral posts. In the hours following a major U.S. and Israeli military operation targeting Iran, the online platform X, formerly known as Twitter, was flooded with misleading posts, recycled footage, AI-generated images, and outright fabricated claims about the conflict. According to investigations by journalists and digital researchers, these posts misrepresented the scale of the attacks, the targets involved, and the consequences on the ground.

The situation highlights a larger and increasingly dangerous reality. In the age of social media and artificial intelligence, information itself has become a weapon. Governments, activists, propagandists, opportunistic influencers, and even automated bots compete to shape public perception during crises. The result can be an overwhelming stream of contradictory claims that makes it difficult for the public to understand what is actually happening.

This article explores the surge of disinformation that followed the reported strikes on Iran, why platforms like X are particularly vulnerable during global crises, how modern misinformation campaigns operate, and what this trend means for journalism, democracy, and global security.

The Immediate Explosion of False Claims

Within minutes of the announcement of the military operation, social media feeds began to fill with dramatic videos, screenshots, and claims of explosions across multiple Iranian cities. Some posts appeared to show missile strikes, aircraft being shot down, or large-scale destruction. Many of these posts quickly accumulated millions of views.

However, closer inspection revealed that much of the content was misleading or entirely false. Journalists analyzing hundreds of viral posts found that some videos were actually months or years old, taken from unrelated conflicts or training exercises. Others had been attributed to the wrong location entirely. In several cases, images appeared to be altered or even generated using artificial intelligence tools.

Another recurring pattern involved video game footage. Clips from military simulation games such as Arma 3 have repeatedly circulated online as supposed real combat footage during geopolitical crises. In one case, gameplay footage was misrepresented as real air combat during the Iran conflict before being debunked.

This phenomenon is not new, but it is becoming increasingly common as high-quality graphics and editing tools make it easier to blur the line between fiction and reality.

The Incentive Structure Behind Viral Misinformation

One reason misinformation spreads rapidly on platforms like X is the economic and social incentives embedded in the platform’s design. The platform allows users to monetize content based on engagement, meaning posts that generate views and interactions can produce financial rewards.

During major breaking news events, dramatic or sensational content tends to spread faster than careful reporting. A shocking video claiming to show a major explosion may go viral within minutes, while fact-checking and verification often take hours or days.

This creates a feedback loop where users who want attention, influence, or revenue have an incentive to post unverified information quickly. The result is an ecosystem where misinformation spreads faster than corrections.

Researchers studying social media behavior have also found that engagement metrics such as likes, shares, and comments can amplify misinformation, making users more likely to trust viral posts even when their credibility is questionable.

The Role of Blue Check Accounts and Influencers

Another factor contributing to the spread of misleading information is the role of high-visibility accounts. Many viral posts during the conflict came from accounts with large followings or paid verification badges.

These accounts often act as amplifiers. When a user with hundreds of thousands or millions of followers shares a video or claim, it gains credibility in the eyes of many viewers even if the information is incorrect.

Investigations into the disinformation surge found that several widely shared posts contained misidentified footage or exaggerated claims, yet still accumulated enormous audiences before being corrected.

This dynamic is particularly dangerous during conflicts because misinformation can influence public perception, diplomatic relations, and even military decisions.

AI-Generated Content Enters the Battlefield

The rise of generative artificial intelligence has added a new dimension to the problem. AI tools can now create convincing images, videos, and voice recordings that appear realistic to casual observers.

In recent conflicts, analysts have detected AI-generated satellite imagery and fabricated battlefield visuals circulating widely on social media. These images may purport to show destroyed military installations or attacks that never occurred.

Because satellite images were once considered highly reliable evidence, AI manipulation of these images represents a significant challenge for verification efforts.

The increasing sophistication of generative AI means that distinguishing between authentic and fabricated media is becoming more difficult even for experts.

The Information War Dimension

Disinformation during wartime is rarely random. In many cases it forms part of broader information warfare campaigns conducted by governments, intelligence services, or political actors.

Throughout modern conflicts, competing sides attempt to shape public opinion by spreading narratives that support their strategic goals. These narratives may exaggerate military successes, downplay losses, or portray opponents as weaker than they actually are.

Researchers studying recent conflicts have found that coordinated networks of accounts can amplify propaganda narratives, sometimes using thousands of automated bots to increase visibility.

The result is an online environment where multiple competing narratives circulate simultaneously, making it difficult for observers to separate fact from propaganda.

Internet Blackouts and the Verification Challenge

The challenge of verifying information becomes even more complicated when governments restrict internet access during conflicts. In Iran, authorities have previously implemented internet shutdowns that dramatically reduce communication with the outside world.

During such blackouts, journalists and investigators often rely on satellite imagery, encrypted messaging, and indirect sources to confirm events on the ground. With connectivity sometimes reduced to only a fraction of normal levels, reporting becomes slower and more dangerous.

When reliable information from inside a conflict zone is limited, misinformation circulating on social media can fill the vacuum.

Historical Patterns of War Disinformation

The explosion of misinformation during the Iran strikes is part of a broader historical pattern. Similar waves of false or misleading content have accompanied numerous recent conflicts.

During the Gaza war, analysts documented tens of thousands of fake or coordinated social media accounts spreading propaganda and misleading claims about battlefield events.

In many cases, the narratives promoted online were tailored for different audiences. Some were designed to influence domestic populations, while others targeted international audiences.

This phenomenon has led some researchers to describe modern conflicts as “information wars” fought simultaneously with traditional military operations.

Why Social Media Platforms Struggle to Respond

Social media companies face significant challenges when trying to moderate misinformation during rapidly evolving events.

First, the sheer volume of posts makes real-time verification extremely difficult. Millions of users can upload videos, photos, and commentary within minutes of a breaking event.

Second, distinguishing between legitimate eyewitness footage and manipulated media often requires detailed analysis, including geolocation, metadata inspection, and cross-referencing with other sources.
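
One such cross-referencing check can be sketched in a few lines of Python. The sketch assumes an investigator has already established, for example through a reverse image search, the earliest time a clip is known to have appeared online. The function name, clip labels, and dates are hypothetical placeholders for illustration, not data from any investigation.

```python
from datetime import datetime, timezone


def is_recycled(earliest_seen: datetime, event_start: datetime) -> bool:
    """Return True if a clip was already online before the event it claims to show."""
    return earliest_seen < event_start


# Hypothetical event time and clip records, for illustration only.
event_start = datetime(2030, 1, 10, 2, 0, tzinfo=timezone.utc)
clips = {
    "clip_a": datetime(2028, 6, 3, 14, 30, tzinfo=timezone.utc),
    "clip_b": datetime(2030, 1, 10, 2, 45, tzinfo=timezone.utc),
}

for name, earliest_seen in clips.items():
    if is_recycled(earliest_seen, event_start):
        verdict = "predates the event"
    else:
        verdict = "not ruled out"
    print(f"{name}: {verdict}")
```

A clip that passes this check is not thereby authentic. The check only rules out one common failure mode, old footage presented as new, and the other steps in the analysis still apply.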

Third, moderation policies must balance the need to remove harmful misinformation with the principle of free expression.

As a result, many platforms rely on community-driven correction systems, such as context notes or fact-checking labels, to add warnings to misleading posts.

However, these corrections often appear only after the original post has already gone viral.

The Role of Journalists and Open Source Investigators

In response to the growing misinformation problem, a new community of open-source investigators and digital verification experts has emerged.

Organizations such as Bellingcat, major newsrooms, and independent analysts use tools such as satellite imagery analysis, reverse image searches, and geolocation techniques to verify the authenticity of online content.

These investigators look for clues such as building layouts, weather patterns, shadow angles, and landmarks that can confirm where and when a video was recorded.
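
The shadow-angle clue rests on simple trigonometry, shown here as a minimal Python sketch. It assumes flat ground, a vertical object of known height, and a shadow length measured from the footage. The function name and the measurements are hypothetical.

```python
import math


def sun_elevation_deg(object_height_m: float, shadow_length_m: float) -> float:
    """Estimate the sun's elevation angle from an object's height and shadow length.

    Assumes flat ground and a vertical object.
    """
    if object_height_m <= 0 or shadow_length_m <= 0:
        raise ValueError("height and shadow length must be positive")
    return math.degrees(math.atan2(object_height_m, shadow_length_m))


# Hypothetical measurements: a 6 m lamp post casting a 6 m shadow.
print(round(sun_elevation_deg(6.0, 6.0), 1))  # 45.0
```

An investigator could compare the estimated elevation with published solar position data for the claimed place and time. That comparison is not implemented here, and a mismatch would suggest the footage was recorded at a different time or location.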

Even with these tools, verification remains a complex process. In fast-moving crises, accurate information often arrives more slowly than misinformation.

The Psychological Impact of Viral Disinformation

Beyond the technical and political implications, disinformation during conflicts can have profound psychological effects on the public.

Images and videos that appear to show massive destruction or casualties can trigger fear, anger, and panic even if they are false.

For people living in affected regions, misleading information about attacks or evacuation routes can create real danger.

Researchers have also found that repeated exposure to conflicting information can lead to information fatigue, where audiences become uncertain about what to believe.

This uncertainty can erode trust not only in social media but also in journalism and public institutions.

The Future of Information Warfare

The events following the U.S. and Israeli strikes on Iran illustrate how quickly digital information ecosystems can become chaotic during geopolitical crises.

As artificial intelligence continues to improve and social media platforms remain central to global communication, the scale and sophistication of misinformation campaigns will likely increase.

Future conflicts may involve coordinated efforts combining AI-generated media, automated bot networks, targeted propaganda, and psychological operations.

This raises important questions about how societies can defend against information manipulation while preserving open communication.

Toward a More Resilient Information Ecosystem

Addressing the misinformation challenge requires cooperation among governments, technology companies, journalists, researchers, and citizens.

Potential strategies include improved transparency from platforms, stronger fact-checking infrastructure, better digital literacy education, and advanced detection systems for AI-generated media.

Ultimately, however, the most important defense may be critical thinking among users themselves.

In a world where anyone can publish content to a global audience instantly, the ability to question and verify information has become an essential skill.

Conclusion

The surge of misinformation on X following the reported strikes on Iran demonstrates how modern conflicts extend far beyond the battlefield.

Within minutes of the first military reports, social media feeds were filled with recycled videos, AI-generated images, and misleading claims about the scale and consequences of the attacks.

This phenomenon reflects a broader transformation in how information circulates during crises. Social media platforms have become arenas where narratives compete for dominance, often amplified by algorithms, influencers, and automated networks.

As technology continues to evolve, the line between reality and digital fabrication will become increasingly difficult to discern.

Understanding this new landscape is essential not only for journalists and policymakers but for anyone trying to make sense of global events in the information age.

The battlefield of the future may still involve aircraft and missiles. But it will also be fought in timelines, comment sections, and viral posts.